Requirements for a Data Orchestrator, Lessons From Building a Package Intelligence Pipeline - Part 1

Introduction

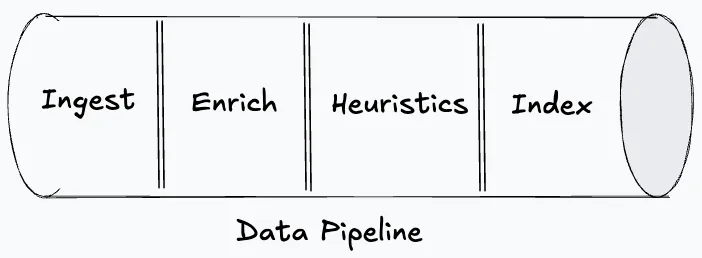

I’m building a data pipeline that ingests package data from nuget (and, incrementally, from other registries) to classify whether a package is open source. The pipeline pulls package data from the registry, enriches it with signals from multiple sources, applies heuristics and serves the results. Before I start building, I want to understand what I need from the data orchestrator that will run this pipeline. This is an ETL (Extract, Transform, Load) pipeline, a well-established pattern with many orchestrator options, and the choice of orchestrator matters more than it might seem.

The data pipeline will have four phases.

- Ingestion from source: This phase will be responsible for ingesting raw data from the nuget registry. The raw data includes package metadata, versions, owners, repository URLs, etc.

- Enrichment: This phase will be responsible for enhancing the raw data by correlating it with other sources. Here, that means checking repository activity, license files and contributor patterns on GitHub.

- Heuristics: This phase consumes the enriched data for each package and scores it on the likelihood of being open-source.

- Index: This phase will serve the classified data after heuristic scoring, so that consumers can query the classification results.

Requirements from a Data Orchestrator

Data as first-class concept

It is easy to envision orchestration as a set of related tasks run in a defined order by some automation. This picture is not wrong for data orchestration, but it is incomplete. In data orchestration, we care more about the data than about the tasks or jobs that produce it. For example, a team or organization is more interested in questions such as “Where is the data stored?”, “How fresh is the data?”, “Are the schemas valid?” and “Are SLAs being met?”. This means the orchestrator should treat data as a first-class concept: users define datasets as named entities with schemas, freshness expectations and declared dependencies, and the orchestrator figures out how to materialize them.

Considering the nuget pipeline, I am more interested in producing a dataset called nuget_packages, with a known schema, freshness expectations and downstream consumers, than in running a sync job. If the orchestrator only thinks in jobs, I lose the ability to ask “is this data fresh?” and can only ask “did the sync job run?”.
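To make the difference concrete, here is a minimal sketch of a dataset declared as a named entity. The `Dataset` class, the `is_fresh` helper, the column names and the 24-hour freshness window are all assumptions made for illustration; they are not the API of any particular orchestrator.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Dataset:
    """A dataset declared as a named entity (hypothetical shape)."""
    name: str
    schema: dict[str, type]            # column name -> expected Python type
    max_staleness: timedelta           # freshness expectation
    depends_on: tuple[str, ...] = ()   # names of upstream datasets


def is_fresh(dataset: Dataset, last_materialized: datetime, now: datetime) -> bool:
    """Answers "is this data fresh?" instead of "did the sync job run?"."""
    return now - last_materialized <= dataset.max_staleness


# Assumed columns and freshness window, for illustration only.
nuget_packages = Dataset(
    name="nuget_packages",
    schema={"package_id": str, "version": str, "repository_url": str},
    max_staleness=timedelta(hours=24),
)

now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
twelve_hours_old = datetime(2024, 1, 2, 0, 0, tzinfo=timezone.utc)
sixty_hours_old = datetime(2023, 12, 31, 0, 0, tzinfo=timezone.utc)

print(is_fresh(nuget_packages, twelve_hours_old, now))  # True
print(is_fresh(nuget_packages, sixty_hours_old, now))   # False
```

The point of the sketch is that freshness is a property of the dataset, so the question can be answered without knowing which job produced the data.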

Incremental Processing & Partitioning

Incremental processing is the cornerstone of an efficient data pipeline, yet it introduces its own complexity: state management, periodic reconciliation (data drifts over the long term due to the quality of signals), schema evolution across historical data (properties being added or removed), backfills after bugs, dependency coordination across incremental stages and so on. It’s a hard problem. No orchestrator can support all of this, because some of these problems are not the orchestrator’s to solve. Still, picking an orchestrator that supports some of them out of the box is an obvious win.

Considering the nuget pipeline, nuget has millions of packages, so a full refresh of the data on every run is infeasible. Syncing only what has changed since the last checkpoint is straightforward in principle, but incremental syncing carries its own state (a cursor or a watermark) that needs to be managed, and that state can drift. Partitioning, say by day, gives the orchestrator the ability to track, retry and backfill each slice independently. Without partitioning, I would have to write a custom script to handle “just backfill last Tuesday’s data”. Partitions also carry dependencies: if enriched_packages is partitioned by day and depends on nuget_packages partitioned by day, the orchestrator needs to know that Tuesday’s enrichment depends on Tuesday’s ingestion. The orchestrator should let me declare partition schemes on datasets and handle tracking, retries and backfills per partition rather than forcing me to build that state management myself.
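The per-partition bookkeeping described above can be sketched in a few lines. This is only an illustration of the state an orchestrator would otherwise manage for me; `DailyPartitions`, `Status` and `ready_to_enrich` are hypothetical names, and the dates are sample values.

```python
from datetime import date, timedelta
from enum import Enum


class Status(Enum):
    MISSING = "missing"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


class DailyPartitions:
    """Tracks materialization status per daily partition (illustrative only)."""

    def __init__(self, start: date, end: date) -> None:
        days = (end - start).days + 1
        self.status = {start + timedelta(days=i): Status.MISSING for i in range(days)}

    def mark(self, day: date, status: Status) -> None:
        self.status[day] = status

    def needs_run(self) -> list[date]:
        """Partitions that still need a run or a retry, oldest first."""
        return sorted(d for d, s in self.status.items() if s is not Status.SUCCEEDED)


def ready_to_enrich(day: date, ingested: DailyPartitions) -> bool:
    """Same-day mapping: a day's enrichment needs that day's ingestion."""
    return ingested.status.get(day) is Status.SUCCEEDED


monday, tuesday, wednesday = date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 3)
ingested = DailyPartitions(monday, wednesday)
ingested.mark(monday, Status.SUCCEEDED)
ingested.mark(tuesday, Status.FAILED)
ingested.mark(wednesday, Status.SUCCEEDED)

print(ingested.needs_run())                # only Tuesday needs a backfill
print(ready_to_enrich(tuesday, ingested))  # False until Tuesday's ingestion succeeds
```

With this state in place, “just backfill last Tuesday’s data” becomes a lookup in `needs_run()` rather than a custom script.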

Dependency Graphs & Change Detection

Dependency graphs and change detection together provide selective rebuilds. A dependency graph gives the order in which the pipeline’s tasks execute; simply put, it answers “which datasets must be materialized before dataset X?”. Data pipelines can be complex and evolve over time, so the ability to represent the pipeline as a dependency graph is foundational. Change detection identifies changes to a dataset (as coarse as a newer materialization time, or as fine as a change in the dataset’s content) and assesses their impact on the rest of the pipeline: if an upstream dataset that X depends on has changed, then X is stale. The orchestrator should support both concepts, because without them every upstream change triggers a full pipeline rebuild. With them, only the affected datasets re-run.

Considering the nuget pipeline, I have four phases: Ingest → Enrich → Classify → Index, where Classify is the heuristics phase. If I decide to add signals in the Enrich phase, the enrichment itself and the downstream Classify and Index phases need to re-run, while the Ingest phase can be skipped. The orchestrator needs to know the graph to order the work and propagate changes, and it needs to detect changes to skip unnecessary work.
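A selective rebuild for this four-phase chain can be sketched with the standard library’s `graphlib`. The dataset names `classified_packages` and `package_index` are made up for the example, and the fingerprints are opaque strings standing in for whatever the orchestrator compares (a content hash, a logic version or a materialization time).

```python
from graphlib import TopologicalSorter

# Each dataset maps to the upstream datasets it depends on.
# classified_packages and package_index are hypothetical names.
GRAPH = {
    "nuget_packages": set(),
    "enriched_packages": {"nuget_packages"},
    "classified_packages": {"enriched_packages"},
    "package_index": {"classified_packages"},
}


def changed_datasets(previous: dict, current: dict) -> set:
    """Change detection: datasets whose fingerprint differs from the last run."""
    return {name for name, fp in current.items() if previous.get(name) != fp}


def stale_datasets(graph: dict, changed: set) -> list:
    """The changed datasets plus everything downstream, in execution order."""
    stale = set(changed)
    order = list(TopologicalSorter(graph).static_order())
    for name in order:  # upstream datasets are always visited first
        if graph[name] & stale:
            stale.add(name)
    return [name for name in order if name in stale]


# New enrichment signals change only the fingerprint of enriched_packages.
previous = {"nuget_packages": "v1", "enriched_packages": "v1"}
current = {"nuget_packages": "v1", "enriched_packages": "v2"}

changed = changed_datasets(previous, current)
print(stale_datasets(GRAPH, changed))
# ['enriched_packages', 'classified_packages', 'package_index']
```

Ingestion is absent from the result, which is exactly the work that gets skipped.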

Event-based Triggers

Scheduling is a big part of a data pipeline: it defines when a specific dataset is triggered for materialization. Time-based schedulers alone aren’t enough for complex data pipelines. Event-based triggers solve problems that time-based triggers can’t, because they tie materialization to a concrete event, such as new data arriving upstream, rather than to a guess about when the data will be ready. Picking a data orchestrator that lets me attach event-based triggers to datasets is important because it reduces manual intervention and operational overhead within the data pipeline.

Considering the nuget pipeline, nuget publishes a feed of recent package updates. Instead of polling on a schedule and hoping, I can watch the feed and trigger materialization when new packages appear. The trigger is the event (new data available), not the clock (run at 2am). For sources without a change feed, I fall back to scheduled polling, but the orchestrator should support both.
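As a rough sketch of the idea, the sensor below requests a materialization only when the feed contains entries past a stored cursor. `FeedSensor`, the `(sequence number, package id)` entry shape and the in-memory feed are assumptions for illustration; the real feed’s format and cursor type may differ.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class FeedSensor:
    """Requests a run only when the feed has entries past the cursor (hypothetical)."""
    fetch_feed: Callable[[], Iterable[tuple[int, str]]]  # yields (sequence number, package id)
    cursor: int = 0
    requested: list[list[str]] = field(default_factory=list)

    def tick(self) -> bool:
        new = sorted((seq, pkg) for seq, pkg in self.fetch_feed() if seq > self.cursor)
        if not new:
            return False  # nothing new, so no run is requested
        self.requested.append([pkg for _, pkg in new])
        self.cursor = new[-1][0]  # advance the cursor after recording the request
        return True


# An in-memory stand-in for the registry's feed of recent updates (sample data).
feed = [(1, "Example.PackageA"), (2, "Example.PackageB")]
sensor = FeedSensor(fetch_feed=lambda: feed)

print(sensor.tick())  # True: two new packages trigger a run
print(sensor.tick())  # False: the feed has not changed
feed.append((3, "Example.PackageC"))
print(sensor.tick())  # True: only the new entry is requested
```

For a source without a change feed, the same `tick` could be called from a schedule, which is the polling fallback described above.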

Data Quality Gates

A pipeline run can be marked as succeeded even though it produced bad data, such as an empty dataset or garbage values. This is as common as any other production defect in an application, but the impact can be devastating, because re-materialization can be enormously costly depending on the data volume and the data-source constraints. When event-based automatic triggers are enabled, bad data in any dataset propagates to its downstream datasets and can corrupt the whole pipeline. A data quality gate is critical to isolate the problem at its origin, take the necessary remediation steps and stop the cascading failures. A data orchestrator with native support for defining data quality gates is more valuable than one without.

Considering the nuget pipeline, the API might return a bad response, or a bug in the ingestion might produce a bad dataset. Without an effective quality gate, the bad dataset is used downstream and may corrupt the output. All of this can happen without the issue being visible until the last moment.
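A quality gate can be as simple as a set of checks that must all pass before a dataset is handed to its downstream consumers. The sketch below is one possible shape, not a specific orchestrator’s feature; the check names, the required fields and `QualityGateError` are assumptions.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def check_not_empty(rows):
    return CheckResult("not_empty", len(rows) > 0, f"{len(rows)} rows")


def check_required_fields(rows, required=("package_id", "version")):
    # Assumed required fields; a row fails if any of them is missing or empty.
    bad = [r for r in rows if any(not r.get(f) for f in required)]
    return CheckResult("required_fields", not bad, f"{len(bad)} rows with missing fields")


class QualityGateError(Exception):
    """Raised to stop downstream materialization when a check fails."""


def quality_gate(rows, checks=(check_not_empty, check_required_fields)):
    """Return the rows unchanged if every check passes, otherwise raise."""
    failed = [r for r in (check(rows) for check in checks) if not r.passed]
    if failed:
        raise QualityGateError("; ".join(f"{r.name}: {r.detail}" for r in failed))
    return rows


good = [{"package_id": "Example.Package", "version": "1.0.0"}]
print(len(quality_gate(good)))  # 1: the dataset passes through

try:
    quality_gate([])  # e.g. the API returned an empty response
except QualityGateError as err:
    print(f"blocked: {err}")  # blocked: not_empty: 0 rows
```

Raising at the gate keeps the failure at its origin, so automatically triggered downstream datasets never see the bad data.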

Pipeline-level Observability

Pipeline-level observability ties together some of the concepts already discussed and adds a few more. Logs, metrics and job status are not enough for observing a complex system such as a data pipeline, because each gives an isolated view. Observability should provide a holistic picture that accumulates information across the layers of the pipeline and answers questions like “did the job complete successfully?”, “is the data fresh enough for its downstream consumers?” and “when was the most recent materialization?”. The data orchestrator should have native support for operational, asset, data quality and performance observability.

Considering the nuget pipeline, I want observability on my datasets (when was a dataset last materialized, is the data fresh), on the pipeline (does my upstream dataset have new data, what is the lineage of my data), and on SLAs, schedules and triggers. This is non-negotiable for maintaining and debugging the pipeline.
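To illustrate what dataset-level answers look like, the sketch below derives them from a materialization log. The log entries, the `UPSTREAM` map and the helper names are all invented for the example; a real orchestrator would record and expose this information itself.

```python
from datetime import datetime, timezone

# Hypothetical materialization log: (dataset, finished_at, succeeded).
EVENTS = [
    ("nuget_packages", datetime(2024, 1, 2, 2, 0, tzinfo=timezone.utc), True),
    ("enriched_packages", datetime(2024, 1, 2, 3, 0, tzinfo=timezone.utc), True),
    ("nuget_packages", datetime(2024, 1, 2, 8, 0, tzinfo=timezone.utc), True),
    ("enriched_packages", datetime(2024, 1, 2, 9, 0, tzinfo=timezone.utc), False),
]
UPSTREAM = {
    "nuget_packages": [],
    "enriched_packages": ["nuget_packages"],
    "classified_packages": ["enriched_packages"],  # hypothetical dataset name
}


def last_success(dataset):
    """When was the dataset last materialized successfully? None if never."""
    return max((t for name, t, ok in EVENTS if name == dataset and ok), default=None)


def lineage(dataset):
    """All transitive upstream datasets, nearest first."""
    seen, queue = [], list(UPSTREAM[dataset])
    while queue:
        name = queue.pop(0)
        if name not in seen:
            seen.append(name)
            queue.extend(UPSTREAM[name])
    return seen


def upstream_has_new_data(dataset):
    """True if any direct upstream succeeded more recently than this dataset."""
    mine = last_success(dataset)
    for parent in UPSTREAM[dataset]:
        theirs = last_success(parent)
        if theirs is not None and (mine is None or theirs > mine):
            return True
    return False


print(last_success("enriched_packages").hour)      # 3: the 09:00 run failed
print(lineage("classified_packages"))              # ['enriched_packages', 'nuget_packages']
print(upstream_has_new_data("enriched_packages"))  # True: ingestion succeeded again at 08:00
```

Job status alone would only say that the last enrichment run failed; the dataset view also shows that the enriched data is from 03:00 and lags behind newer ingested data.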

These are the requirements that I would hold any orchestrator to before building the pipeline. In the next part, I’ll compare tools like Dagster, Apache Airflow and Prefect against these requirements, then walk through the architecture of the nuget classification pipeline.